A security vendor called Wiz published a state-of-PQC (post-quantum cryptography) report this week, and it contains a line that should make anyone who has ever actually migrated anything in IT spit out their coffee in disgust.

Can these guys get any more tone-deaf and arrogant?

> Session negotiation key exchange for both TLS and SSH is a “solved problem” in that it has been implemented broadly now and just needs software to be updated…

Solved. Post-quantum is done, everyone. You can go home now.

Why? The explanation given is that key exchange has been implemented broadly for two protocols, and now it “just needs software to be updated.”

Just needs software to be updated. Hmmm.

When, in the entire history of the Internet, has that phrase ever meant something was already solved? Updating software is the WHOLE PROBLEM. It comes right after “just needs hardware to be updated.” Where’s my wrench to throw at my screen?

This is, for the record, the same ridiculous Wiz crew I’ve written about before. This is the crew that packaged an unauthorized intrusion as “research,” fully aware that doing so is unethical, with the hallmarks of military-intelligence tactics dressed up as a blog post. It’s the same crew whose handling of a Microsoft AI data leak raised more questions than it answered. There’s a pattern here, and it’s not about integrity. It’s about presentation far more than engineering. The PQC report is disinformation in the same genre: an incomplete migration dressed up as done.

But wait, there’s more. Never forget that Wiz, per Orca’s filed complaint, built a scanning architecture around lifting point-in-time snapshots out of customer environments, copying Orca’s “MRI” pitch almost word-for-word while doing it.

I could go on about their super shady past, but let’s dig in here, because you know I’m a glutton for integrity breach response.

The standard for post-quantum key exchange, ML-KEM, exists. OpenSSL 3.5 ships it. Go’s crypto/tls enables it by default (as of Go 1.24). The standard is cooked and the code is merged, so the problem is declared closed, when it is nothing of the sort. Everything after “the library can do it” is the Sisyphean reality of rollout, and large organizations balk and tremble at the mere thought of rolling anything out.

For perspective: I once managed fifteen teams on a weekly cadence for twelve months just to deprecate TLS 1.0, and that was at a small SaaS company. One protocol version, switched off, at one small startup running a modern stack. A year of grueling meetings.

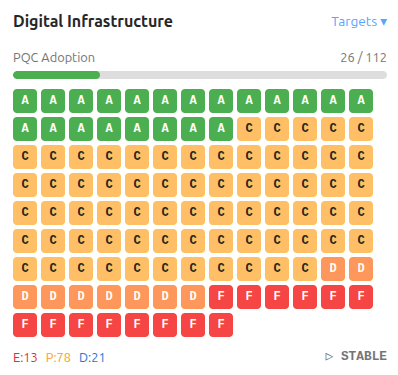

And here’s the real kicker. In the same Wiz report, a few paragraphs down, they print the numbers that bury their own “solved” claim. Fewer than 15% of OpenSSL instances are even capable of PQC. 4.4% of OpenSSH installs are on a version new enough to negotiate it. Of the TLS 1.3 connections their own sensor watches, 15% are using a quantum-resistant key exchange. A full year into the easiest, best-supported, most-shipped half of the entire migration, that’s the field result.

If it were solved, those numbers would be high. They are low. The gap between “the library Wiz gets paid to see can do it” and “the wire is doing it” is not a rounding error that closes on its own. That gap is the actual rollout. It is the whole job. Calling it solved is how you advertise that you’ve never had to solve it.

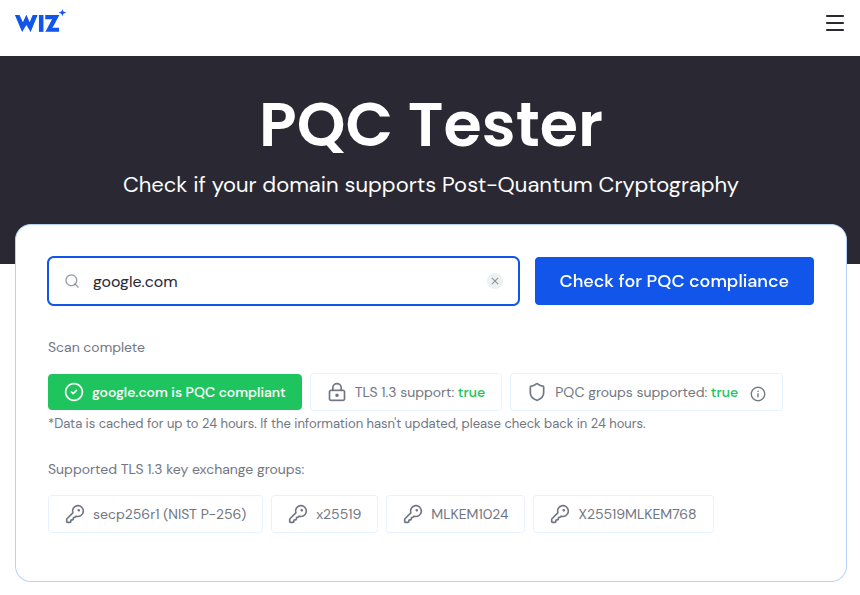

If you want to test the “solved” propaganda, compare Wiz’s scans against other scanners.

Wiz tossed out a free PQC Tester. Point it at google.com and it lights up all green: “google.com is PQC compliant.” TLS 1.3, true. PQC groups supported, true. X25519MLKEM768 right there in the list. Done. Solved. Go tell the board you should forklift Wiz their bazillion dollars in fees.

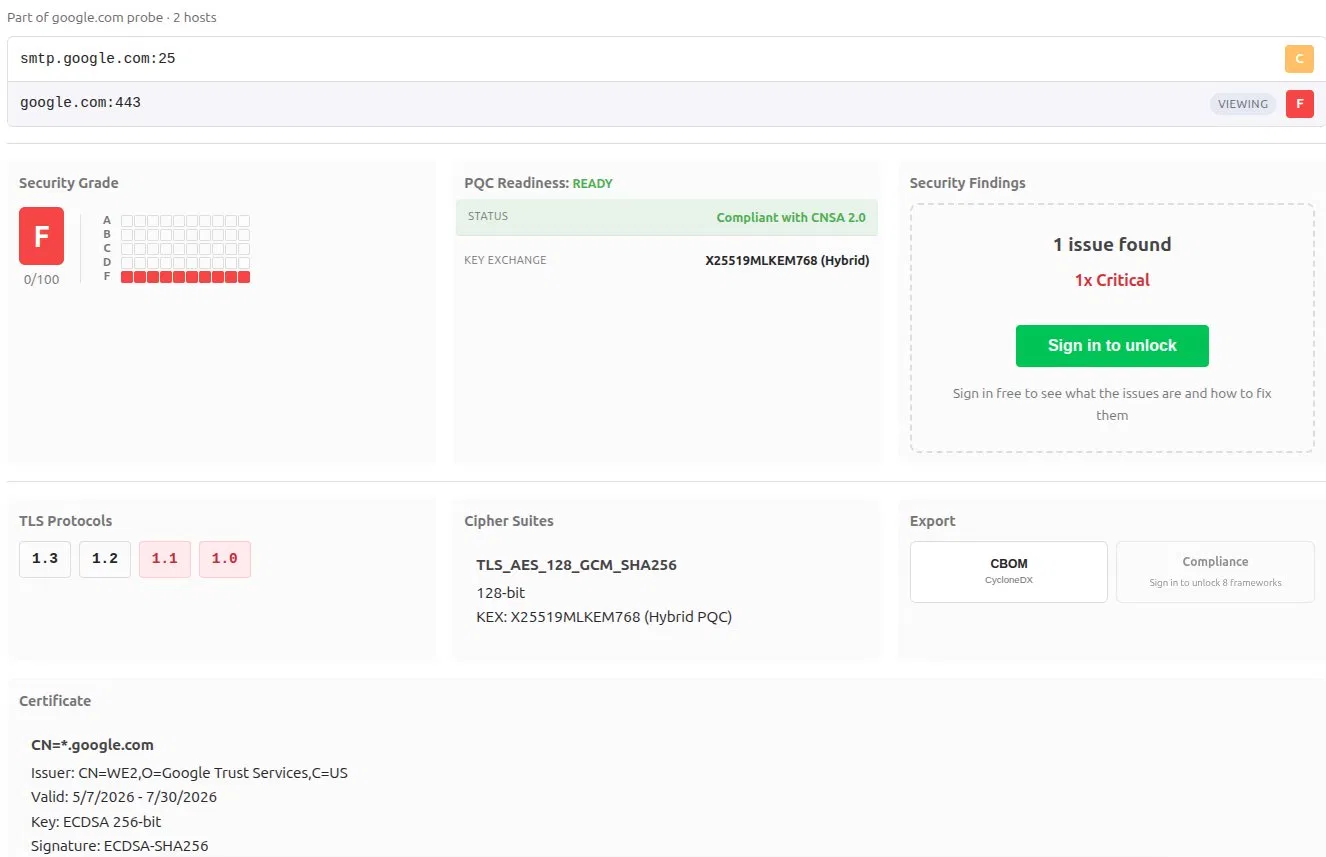

Now point an actual external posture probe at the same host. pqprobe scores google.com:443 an F. Zero points out of a hundred.

The reason: the Google site still answers TLS 1.1 and TLS 1.0. Google knows. And yet Wiz certainly doesn’t tell you what’s really happening.

Both results are technically correct; Wiz simply omits the data that would contradict its narrative. Only one of them answers a question about preparedness. Wiz asks: can this endpoint support a post-quantum group right now, at this second? Yes. That’s a supported-groups lookup. It asks the server what it is willing to do. It is the bottom floor, a cellar, for a fleeting second, with a green badge that lasts… how long? The other probe does something meaningful: it scores the whole host on its worst case and keeps track over time. The post-quantum handshake itself is genuinely fine, a hybrid X25519MLKEM768 exchange, but the same host still answers TLS 1.1 and TLS 1.0. Legacy protocols a decade past their funeral, live, on the box you were just told is “PQC compliant.”

Compliant? Not only ready, not just prepared. Fully compliant!

Wiz’s tool lies about preparedness by using a capability boolean. It is structurally blind, because a capability check is not about compliance posture. Support is not preparedness. The reassurance their report is selling cannot see the thing that actually would get you breached!
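The difference between the two questions fits in a few lines. Below is a minimal Python sketch, assuming a hypothetical `HostObservation` record filled in by some external probe; the letter grades and the rule that any reachable TLS 1.0/1.1 fails the host are illustrative assumptions, not the actual scoring logic of Wiz’s tester or pqprobe.

```python
from dataclasses import dataclass

LEGACY_VERSIONS = {"TLSv1.0", "TLSv1.1"}
HYBRID_GROUP = "X25519MLKEM768"


@dataclass(frozen=True)
class HostObservation:
    """What an external probe saw on one host (hypothetical record shape)."""
    supported_groups: frozenset[str]
    accepted_versions: frozenset[str]


def capability_check(obs: HostObservation) -> bool:
    # The "green badge" question: is a post-quantum group on offer right now?
    return HYBRID_GROUP in obs.supported_groups


def worst_case_grade(obs: HostObservation) -> str:
    # The posture question: grade by the weakest thing the host still accepts.
    # The letter scale is illustrative, not any real tool's rubric.
    if obs.accepted_versions & LEGACY_VERSIONS:
        return "F"  # legacy protocols reachable: fail regardless of PQC support
    if not capability_check(obs):
        return "C"  # modern protocols only, but no post-quantum key exchange
    return "A"


# Usage: the same observation passes one question and fails the other.
host = HostObservation(
    supported_groups=frozenset({HYBRID_GROUP, "X25519"}),
    accepted_versions=frozenset({"TLSv1.3", "TLSv1.2", "TLSv1.1", "TLSv1.0"}),
)
print(capability_check(host), worst_case_grade(host))  # True F
```

That is the whole design choice: `capability_check` reports the best thing the host can do, while `worst_case_grade` is dragged down by the worst thing it still accepts.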

And note the asterisk on their own tester: the results it shows may be cached for up to 24 hours. A point-in-time check is bad enough; this one can be a day stale and still be presented as ready. Preparedness means tracking drift over time, everywhere the host is reachable, not a green light from yesterday.
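Tracking drift, rather than trusting a cached badge, can be sketched in the same spirit. This assumes a hypothetical `Scan` record produced by repeated external probes; the 24-hour window mirrors the tester’s asterisk, and what counts as a regression here (PQC negotiation disappearing, or legacy protocols reappearing) is an illustrative choice, not a standard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

MAX_AGE = timedelta(hours=24)  # the cache window from the tester's asterisk


@dataclass(frozen=True)
class Scan:
    taken_at: datetime
    pqc_negotiated: bool   # did a handshake actually use a post-quantum group?
    legacy_accepted: bool  # did the host still accept TLS 1.0/1.1?


def is_stale(scan: Scan, now: datetime, max_age: timedelta = MAX_AGE) -> bool:
    # Older than the window: it describes the host as it was, not as it is.
    return now - scan.taken_at > max_age


def regressions(history: list[Scan]) -> list[str]:
    # Walk scans in time order and report every step where posture got worse.
    ordered = sorted(history, key=lambda s: s.taken_at)
    found = []
    for prev, cur in zip(ordered, ordered[1:]):
        when = cur.taken_at.isoformat()
        if prev.pqc_negotiated and not cur.pqc_negotiated:
            found.append(f"{when}: post-quantum negotiation stopped")
        if not prev.legacy_accepted and cur.legacy_accepted:
            found.append(f"{when}: legacy protocol reappeared")
    return found


# Usage with made-up timestamps: a scan that looked fine, then one that didn't.
t0 = datetime(2025, 1, 1, tzinfo=timezone.utc)
history = [Scan(t0, True, False), Scan(t0 + timedelta(hours=6), False, False)]
print(is_stale(history[0], now=t0 + timedelta(hours=30)))  # True
print(regressions(history))
```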

Now the part that really chaps my hide.

Flipping on the hybrid X25519MLKEM768 handshake is easy right up until it isn’t. It changes how many bytes go on the wire. A classical X25519 client key share is 32 bytes. The hybrid share is 1,216 bytes (1,184 for the ML-KEM-768 encapsulation key plus 32 for the X25519 share). That is not a tweak. It produces a ClientHello that no longer fits inside a single packet on a typical 1,500-byte-MTU path.
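The arithmetic is easy to check. A rough Python sketch, assuming a 1,500-byte Ethernet MTU with plain 20-byte IPv4 and 20-byte TCP headers (1,460 bytes of payload per segment) and a hypothetical 512 bytes for everything else in the ClientHello; real hellos vary in size, and clients commonly send a classical X25519 share alongside the hybrid one, which the sketch includes.

```python
X25519_SHARE = 32   # bytes: classical X25519 public key
MLKEM768_EK = 1184  # bytes: ML-KEM-768 encapsulation key
HYBRID_SHARE = MLKEM768_EK + X25519_SHARE  # 1,216 bytes for X25519MLKEM768

KEY_SHARE_ENTRY_OVERHEAD = 4  # 2-byte group id + 2-byte length per entry

ETHERNET_MTU = 1500
IPV4_TCP_HEADERS = 40  # 20-byte IPv4 + 20-byte TCP header, no options
MSS = ETHERNET_MTU - IPV4_TCP_HEADERS  # 1,460 bytes of TLS data per segment


def hello_size(base_bytes: int, key_shares: list[int]) -> int:
    """Approximate ClientHello bytes on the wire: everything else plus key shares."""
    return base_bytes + sum(KEY_SHARE_ENTRY_OVERHEAD + s for s in key_shares)


def segments_needed(total_bytes: int, mss: int = MSS) -> int:
    return -(-total_bytes // mss)  # ceiling division


# Hypothetical: 512 bytes for the rest of the hello (headers, cipher
# suites, SNI, other extensions). Real clients differ.
BASE = 512
classical = hello_size(BASE, [X25519_SHARE])
hybrid = hello_size(BASE, [HYBRID_SHARE, X25519_SHARE])
print(classical, segments_needed(classical))  # 548 1
print(hybrid, segments_needed(hybrid))        # 1768 2
```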

For decades, one segment was all you needed, and a great deal of network equipment was built by people who quietly assumed it always would be. Load balancers. Inspection appliances. The TLS-terminating box three hops upstream that nobody on the team can locate, let alone log in to. When the hello suddenly arrives split across two packets, an appliance that assumed one packet does not negotiate gracefully downward. It cannot read far enough into the message to do anything graceful. It drops the connection.
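Here is a toy model of that failure in Python. The record framing (5-byte header with a 2-byte length) follows TLS, but the ClientHello body is zero-filled filler, and `naive_box` is a hypothetical appliance that only inspects the first TCP segment. Real middleboxes fail in more varied ways; this only shows why “one packet” logic breaks once the record spans two.

```python
import struct

HANDSHAKE = 0x16     # TLS record content type: handshake
CLIENT_HELLO = 0x01  # handshake message type
MSS = 1460           # typical TCP payload per segment on a 1,500-byte MTU path


def client_hello_record(body_len: int) -> bytes:
    """Build a dummy TLS record holding a ClientHello with a zero-filled body."""
    handshake = bytes([CLIENT_HELLO]) + body_len.to_bytes(3, "big") + bytes(body_len)
    # Record header: content type, legacy_record_version 0x0301, length
    return struct.pack("!BHH", HANDSHAKE, 0x0301, len(handshake)) + handshake


def split(payload: bytes, mss: int = MSS) -> list[bytes]:
    return [payload[i:i + mss] for i in range(0, len(payload), mss)]


def naive_box(segments: list[bytes]) -> str:
    # Hypothetical ossified appliance: looks only at the first segment and
    # treats a record that runs past it as malformed.
    first = segments[0]
    ctype, _version, length = struct.unpack("!BHH", first[:5])
    if ctype != HANDSHAKE or len(first) - 5 < length:
        return "drop"
    return "pass"


def reassembling_box(segments: list[bytes]) -> str:
    # Buffers segments until the whole record has arrived, as TCP intends.
    buf = b""
    for seg in segments:
        buf += seg
        if len(buf) < 5:
            continue
        ctype, _version, length = struct.unpack("!BHH", buf[:5])
        if ctype != HANDSHAKE:
            return "drop"
        if len(buf) - 5 >= length:
            return "pass"
    return "drop"  # stream ended before the record was complete


small = split(client_hello_record(500))   # classical-sized: one segment
large = split(client_hello_record(1700))  # hybrid-sized: two segments
print(naive_box(small), naive_box(large))                # pass drop
print(reassembling_box(small), reassembling_box(large))  # pass pass
```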

Boom. Not solved.

That is a huge failure-mode risk that lives outside Wiz’s narrow view. There is no clean “PQC not supported, falling back to classical.” There is no crypto error in the log to grep for. There is a connection that completed yesterday and hangs today, where the only thing that changed was the no-op that Wiz’s thought leadership promised you was solved. You push the easy button, the lights go dark, and nothing anywhere tells you your post-quantum upgrade just punched itself in the face.
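Since there is no error to grep for, one way to surface this is to compare outcomes on the same route. The sketch below assumes hypothetical `Attempt` records from handshakes run with each key share forced in turn (how you force them depends on your client and is out of scope here), and its thresholds are arbitrary placeholders rather than tuned values.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Attempt:
    key_share: str  # e.g. "X25519" or "X25519MLKEM768"
    outcome: str    # "ok", "alert", "reset", or "timeout"


# Failures that leave no TLS alert behind, so nothing shows up as a crypto error.
SILENT = {"reset", "timeout"}


def _count(c: Counter) -> int:
    return sum(c.values())


def suspect_size_intolerance(attempts: list[Attempt], min_tries: int = 5) -> bool:
    """Flag a route where classical handshakes succeed but hybrid ones die silently."""
    by_share: dict[str, Counter] = {}
    for a in attempts:
        by_share.setdefault(a.key_share, Counter())[a.outcome] += 1
    classical = by_share.get("X25519", Counter())
    hybrid = by_share.get("X25519MLKEM768", Counter())
    if _count(classical) < min_tries or _count(hybrid) < min_tries:
        return False  # not enough evidence either way
    classical_ok = classical["ok"] / _count(classical)
    hybrid_silent = sum(hybrid[o] for o in SILENT) / _count(hybrid)
    # Placeholder thresholds: mostly-working classical, mostly-silent hybrid.
    return classical_ok >= 0.9 and hybrid_silent >= 0.5


attempts = [Attempt("X25519", "ok")] * 10 + [Attempt("X25519MLKEM768", "timeout")] * 10
print(suspect_size_intolerance(attempts))  # True
```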

Some of us remember the last time we changed the shape of a handshake. It feels like yesterday. Maybe that’s just because my network’s nose is still sore.

When browser vendors began testing TLS 1.3 in early 2017, the results were alarming. A significant share of connections failed the instant a browser advertised the new version. Servers rejected it. Firewalls, load balancers, and embedded appliances saw a handshake that didn’t match the shape they’d hard-coded, and threw it away. It even had a name: protocol ossification. The flexible parts of the protocol had sat unchanged for so long that they had hardened into constants.

The fix was a mask, a hack. TLS 1.3 was made to lie about itself: a fake 1.2 version number in the clear, a bogus session ID, a dummy ChangeCipherSpec record. Seeing what looked like a TLS 1.2 session resumption, the ossified box let through the thing it couldn’t see. Open sesame. That disguise shipped on by default, in OpenSSL and everywhere else, and it is still on. It is called middlebox compatibility mode, and it exists because “just update the software” burned an entire industry for four years.
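A toy Python model of the disguise, for the curious. Field names follow RFC 8446 (`legacy_version`, `legacy_session_id`, `supported_versions`), and the six dummy ChangeCipherSpec bytes are the record TLS 1.3 compatibility mode actually sends. The “honest” hello and the version-checking appliance are hypothetical: no shipping TLS 1.3 client puts 0x0304 in `legacy_version`, precisely because of boxes like this one.

```python
import os
from dataclasses import dataclass

TLS1_2 = 0x0303
TLS1_3 = 0x0304

# Dummy ChangeCipherSpec record sent in compatibility mode:
# content type 20, legacy version 0x0303, length 1, payload 0x01.
DUMMY_CCS = bytes([0x14, 0x03, 0x03, 0x00, 0x01, 0x01])


@dataclass(frozen=True)
class HelloFields:
    legacy_version: int                  # the version field visible in the clear
    legacy_session_id: bytes             # non-empty: looks like a 1.2 resumption
    supported_versions: tuple[int, ...]  # where TLS 1.3 says what it really wants


def disguised_hello() -> HelloFields:
    # What TLS 1.3 sends: claims 1.2 up front, real versions in an extension.
    return HelloFields(TLS1_2, os.urandom(32), (TLS1_3, TLS1_2))


def honest_hello() -> HelloFields:
    # Hypothetical: a hello that simply announced 1.3. Not what real clients send.
    return HelloFields(TLS1_3, b"", (TLS1_3,))


def ossified_box_passes(hello: HelloFields) -> bool:
    # Hypothetical appliance that hard-coded "versions top out at TLS 1.2".
    return hello.legacy_version <= TLS1_2


def server_picks(hello: HelloFields, server_max: int = TLS1_3) -> int:
    # A TLS 1.3 server reads supported_versions and ignores legacy_version.
    return max(v for v in hello.supported_versions if v <= server_max)


print(ossified_box_passes(honest_hello()))           # False
d = disguised_hello()
print(ossified_box_passes(d), hex(server_picks(d)))  # True 0x304
```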

Mozilla measured it at the time against a controlled Facebook endpoint: the honest TLS 1.3 handshake failed noticeably more often than the one wearing the 1.2 costume. The update was neither clean nor easy.

PQC won’t break things in quite the way 1.3 did: 1.3 changed the handshake’s shape, while PQC changes its size. But it lands on the very same ossified middle.

So the deep trouble with Wiz unilaterally declaring the problem solved is that it tells everyone to stop looking exactly where they most need to look. The library version is the one thing their overpriced asset inventory can see. The appliance that chokes on a split ClientHello is the one thing it cannot: often third-party, sitting in the path, in nobody’s software bill of materials, invisible until the hello gets too big. You do not find it by counting installed packages. You find it by probing the wire on the route the client actually takes, and by watching whether negotiation keeps succeeding over time.

That’s the whole reason external, continuous probing exists, and why agent-based crypto inventory doesn’t replace it: capability is not deployment, deployment is not negotiation, and a snapshot of what you installed says nothing about whether the box sitting between endpoints will let it through. [PQ]probe measures what the handshake actually does on the path to your origin, the precise spot where “just update the software” goes to die quietly.
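The capability, deployment, negotiation ladder can be written down as a checklist. A minimal Python sketch: `library_capable` uses the real `ssl.OPENSSL_VERSION_INFO` tuple and assumes Python is linked against OpenSSL (not LibreSSL or another fork with its own numbering), while the `Evidence` record and its three sources are hypothetical stand-ins for an inventory, a config dump, and an external handshake on the client’s route.

```python
import ssl
from dataclasses import dataclass
from typing import Optional

HYBRID = "X25519MLKEM768"


def library_capable(version_info: tuple = ssl.OPENSSL_VERSION_INFO) -> bool:
    # Stage 1, capability: is the linked OpenSSL 3.5 or newer? Assumes OpenSSL
    # numbering. Says nothing about configuration or the network path.
    return tuple(version_info[:2]) >= (3, 5)


@dataclass(frozen=True)
class Evidence:
    library_ok: bool                    # from inventory or a version check
    configured_groups: tuple[str, ...]  # from the deployed server configuration
    negotiated_group: Optional[str]     # from a handshake on the client's route; None if it failed


def first_gap(e: Evidence) -> str:
    # Report the earliest stage where the chain breaks.
    if not e.library_ok:
        return "capability: the library cannot do it"
    if HYBRID not in e.configured_groups:
        return "deployment: the library can, the config does not offer it"
    if e.negotiated_group != HYBRID:
        return "negotiation: offered, but not what the wire actually used"
    return "none: negotiated on the observed path"


print(first_gap(Evidence(True, (HYBRID, "X25519"), "X25519")))
```

An inventory agent only ever sees the first rung; the last one requires watching the handshake itself.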

The honest message is buried in Wiz’s own report and contradicted by the marketing around it: the standard is finished and the migration has barely started, because the migration was never the standard. It was always the wire, and the ossified middle, and the long tail of equipment that works because it hasn’t really been tested.

Solved. We’ll see how solved it feels when turning on a handshake bogarts the hardware, the software, or both.